Snowflake provides a scalable, secure, and cost-effective solution for storing and analyzing large volumes of data in real time. However, choosing the appropriate Snowflake warehouse size can be a daunting task, as it significantly affects both Snowflake costs and query performance.

In this article, we'll cover the detailed steps you need to take to ensure your Snowflake virtual warehouse is the “right size”, striking the perfect balance between price and performance.

What is a Snowflake virtual warehouse?

A Snowflake virtual warehouse, often referred to simply as a “warehouse”, is a cluster of compute resources used for running queries and tasks within the Snowflake platform. It functions as an on-demand resource, separate from data storage, and is loosely comparable to a virtual machine (VM).

One of the key features of Snowflake's Virtual Warehouse is the separation of storage and compute infrastructure, meaning that the compute resources utilized for analytics query processing are not tied to the data loading characteristics or overall storage volume of a given instance.

A Snowflake virtual warehouse comprises compute nodes that work together to provide increased computational power. The number of nodes available for a query doubles with each increase in warehouse size, starting with one node for an X-Small warehouse and scaling up to 512 nodes for a 6X-Large warehouse. In the best case, when a query parallelizes well, this doubling of processing power roughly halves the elapsed query time with each step up in size.

For instance, a Medium warehouse has 4 nodes, while a Large warehouse has 8 nodes. When a query is executed on a warehouse, Snowflake may use as many of the available nodes in parallel as the query allows. This means that a Large warehouse (8 nodes) can complete the same task up to twice as fast as a Medium warehouse (4 nodes), although the actual speedup depends on the query.
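The doubling rule can be written down in a few lines. The following is a minimal sketch, assuming one node at X-Small and a doubling at every step as described above; the function names are illustrative, and the speedup it returns is a best-case upper bound rather than a prediction.

```python
# Warehouse sizes in ascending order, as named in this article.
SIZES = ["X-Small", "Small", "Medium", "Large", "X-Large",
         "2X-Large", "3X-Large", "4X-Large", "5X-Large", "6X-Large"]


def node_count(size: str) -> int:
    """Nodes for a size, assuming 1 node at X-Small and doubling per step."""
    return 2 ** SIZES.index(size)


def ideal_speedup(from_size: str, to_size: str) -> float:
    """Best-case speedup if the query parallelizes perfectly across all nodes."""
    return node_count(to_size) / node_count(from_size)


if __name__ == "__main__":
    for size in SIZES:
        print(f"{size:>9}: {node_count(size)} node(s)")
    print("Medium -> Large, best case:", ideal_speedup("Medium", "Large"))
```

Real queries rarely hit the ideal figure, which is why the steps later in this article rely on measured durations.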

Snowflake offers two types of virtual warehouses: Standard and Snowpark-optimized.

Standard virtual warehouses come in sizes ranging from X-Small to 6X-Large. Snowpark-optimized virtual warehouses, on the other hand, come in sizes ranging from Medium to 6X-Large.

Note: Snowpark-optimized warehouses are not supported on X-Small or SMALL warehouse sizes.

Snowpark-optimized virtual warehouses are designed for workloads with high memory requirements, such as ML training and other memory-intensive tasks. They provide 16 times the memory per node of a standard virtual warehouse, making them an excellent choice for handling such workloads on a single virtual warehouse.


How do cost & performance change as Snowflake warehouse size increases?

The size of a Snowflake virtual warehouse affects both cost and performance. All warehouses, regardless of size, are charged based on the amount of time they are running, whether actively processing queries or waiting for one to be issued. The hourly costs—measured in Snowflake credits—are also doubled with every increase in warehouse size.

Snowflake uses t-shirt sizing names for its warehouses. The available sizes range from X-Small to 6X-Large. For many Snowflake users, an X-Small warehouse is sufficient and can handle surprisingly large datasets, depending on the complexity of the workload.

As previously stated, there are two types of virtual warehouses, Standard and Snowpark-optimized, and each type charges credits differently.

Knowing how Snowflake's resources are billed is crucial to understanding how to use the platform. While we'll touch on some of the essential aspects briefly, for a thorough explanation of Snowflake credit costs and ways to decrease the Snowflake costs, we recommend reading our two articles: 8 Ways to Decrease Snowflake Costs & 4 Best Snowflake Cost Estimation Tools

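For standard virtual warehouses, credit consumption starts at 1 credit per hour for an X-Small warehouse and doubles with each size: Small 2, Medium 4, Large 8, X-Large 16, 2X-Large 32, 3X-Large 64, 4X-Large 128, 5X-Large 256, and 6X-Large 512 credits per hour.

Snowpark-optimized virtual warehouses have their own credit/hour table, with a higher rate than a standard warehouse of the same size; refer to Snowflake's documentation for the current figures.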

Note: Snowflake bills warehouses per second, with a 60-second minimum each time a warehouse starts or resumes (resizing to a larger size applies the same minimum to the added compute). Even if the warehouse runs for only a few seconds, you are charged for a full minute of usage. Snowflake also offers serverless compute and cloud services compute, which have different credit structures. Check out our article to learn more about them.
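To make the billing arithmetic concrete, here is a rough sketch of how the credits for a single run of a standard warehouse could be estimated. It assumes 1 credit per hour for X-Small with doubling per size, per-second billing, and the 60-second minimum mentioned in the note; the function names are hypothetical, and the result is an estimate, not an invoice.

```python
import math

# Standard warehouse sizes in ascending order.
SIZES = ["X-Small", "Small", "Medium", "Large", "X-Large",
         "2X-Large", "3X-Large", "4X-Large", "5X-Large", "6X-Large"]


def credits_per_hour(size: str) -> int:
    """Hourly rate, assuming 1 credit/hour at X-Small and doubling per step."""
    return 2 ** SIZES.index(size)


def billed_seconds(run_seconds: float) -> int:
    """Seconds billed for one continuous run, applying the 60-second minimum."""
    return max(60, math.ceil(run_seconds))


def credits_used(size: str, run_seconds: float) -> float:
    """Estimated credits for one continuous run of a standard warehouse."""
    return credits_per_hour(size) * billed_seconds(run_seconds) / 3600


if __name__ == "__main__":
    # A 10-second run is still billed as a full minute.
    print(credits_used("X-Small", 10))
    # One full hour on a Large warehouse.
    print(credits_used("Large", 3600))
```

Multiplying the result by your contract's price per credit gives an approximate cost for the run.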

As for performance, increasing the warehouse size can significantly reduce processing time for complex queries that use large tables and/or multiple joins. This is because the most computationally heavy operations (TableScans, ExternalScans, and Joins) benefit from the additional compute and working memory that come with larger warehouse sizes. However, if the data a query processes is heavily skewed, so that a few nodes end up doing most of the work, increasing the warehouse size might not improve performance as much as expected.

Finally, now that we know what a Snowflake virtual warehouse is and how much it costs, it's time to get into the article's core. Let's review how to "right-size" your virtual warehouse for optimal Snowflake performance.

Steps to Effectively Right-Size Your Snowflake Virtual Warehouse

Selecting the right warehouse size in Snowflake can significantly impact performance, potentially saving hours of waiting for failed queries and quickly delivering results for complex queries. To find the best warehouse size, it's crucial to regularly perform tests that help identify the right size. Whether you're setting up a new warehouse or adjusting the size of an existing one, following these steps can help you find the optimal size for your needs.

1) Start Small and Scale Up as Needed

To effectively right-size your Snowflake virtual warehouse, it's essential to start small and gradually increase the size until you find the sweet spot of maximum performance at the lowest cost.

Start with the X-SMALL warehouse and run your workloads. If queries are slow, increase the size one step at a time until performance is satisfactory. Remember that each size increase doubles the hourly credit rate, so a query needs to run roughly twice as fast on the larger size for its total cost to stay the same. X-SMALL or SMALL warehouses may suffice for small-scale operations, while larger sizes (X-LARGE to 6X-LARGE) are suitable for bulk loading and heavy calculations in large-scale environments.

Here are a few essential points to consider in order to create the optimal balance between cost and performance:

- Start with an X-SMALL warehouse and gradually increase the size until the query duration stops halving.

- Choose the Snowflake warehouse size that offers the best cost-to-performance ratio, usually one size smaller than the largest warehouse the query can fully utilize.

- If increasing the Snowflake warehouse size only results in a small decrease in query time, sticking with the smaller warehouse may be more cost-effective. If faster performance is necessary, a larger size can still be selected, but expect diminishing returns.
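The points above can be sketched as a small selection routine. This is an illustration only: the timings are made-up measurements of one query at each size, `pick_size` is a hypothetical helper, and the 1.8x threshold is an assumed stand-in for "the duration roughly halves".

```python
def pick_size(durations, min_speedup=1.8):
    """Return the last size whose step up was still worth it.

    durations: list of (size, seconds) pairs ordered from smallest to
    largest warehouse, measured for the same query. Seconds must be > 0.
    """
    best_size, best_secs = durations[0]
    for size, secs in durations[1:]:
        # Stop as soon as a step up no longer comes close to halving the time.
        if best_secs / secs < min_speedup:
            break
        best_size, best_secs = size, secs
    return best_size


if __name__ == "__main__":
    # Hypothetical measurements for one representative query.
    measured = [("X-Small", 400), ("Small", 205), ("Medium", 110), ("Large", 95)]
    print(pick_size(measured))  # Medium
```

In this made-up run the step from Medium to Large saves only 15 seconds while doubling the hourly rate, so Medium is the size the routine settles on.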

2) Automate Warehouse Suspension + Resumption

Selecting the initial Snowflake warehouse size is important, but Snowflake also lets you automate warehouse suspension and resumption, which gives you more flexibility to start with a larger size and adjust it based on workloads.

With automatic suspension, a warehouse can be configured to suspend itself after a period of inactivity (the default is 10 minutes). To strike a balance between performance and cost, a 60-second auto-suspend is often suggested instead of the default 600 seconds.

This approach is useful because Snowflake charges credits for the time a warehouse is running, not only for the time it spends processing requests. Hence, automatic warehouse suspension prevents warehouses from consuming credits when they are not being used. Note that when a warehouse suspends, its local cache is cleared. Therefore, if there are repeating queries that scan the same tables, setting the auto-suspend interval too low will lead to a drop in performance.

On the other hand, auto-resume gives you more control over costs and access to warehouses. If you prefer manual control over when your warehouse resumes processing, you can disable auto-resume. Otherwise, the warehouse will spin back up whenever new queries are submitted.

Automating warehouse suspension and resumption can effectively manage costs and ensure that resources are only consumed when necessary. This approach provides more flexibility to businesses/users that have unpredictable workloads or need to adjust Snowflake warehouse sizes frequently.
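As an illustration, the auto-suspend and auto-resume settings discussed above are applied with an `ALTER WAREHOUSE ... SET` statement. The sketch below only builds that statement as a string; the helper name and the warehouse name `analytics_wh` are hypothetical, and you would run the resulting SQL through whatever client you already use.

```python
import re


def auto_suspend_statement(warehouse: str, suspend_seconds: int = 60,
                           auto_resume: bool = True) -> str:
    """Build an ALTER WAREHOUSE statement for auto-suspend and auto-resume."""
    # Accept only simple unquoted identifiers, since the name is interpolated.
    if not re.fullmatch(r"[A-Za-z_][A-Za-z0-9_$]*", warehouse):
        raise ValueError(f"unexpected warehouse name: {warehouse!r}")
    if suspend_seconds < 0:
        raise ValueError("suspend_seconds must not be negative")
    resume = "TRUE" if auto_resume else "FALSE"
    return (f"ALTER WAREHOUSE {warehouse} "
            f"SET AUTO_SUSPEND = {suspend_seconds} AUTO_RESUME = {resume};")


if __name__ == "__main__":
    print(auto_suspend_statement("analytics_wh"))
```

A 60-second value mirrors the suggestion above; for warehouses that serve repeated queries over the same tables, a longer interval may preserve more of the local cache.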

3) Check if Your Snowflake Warehouse is Under or Over Provisioned

Determining whether a warehouse is under- or over-provisioned is essential to choosing the appropriate Snowflake warehouse size. This evaluation helps you optimize resource allocation, control costs, and ensure efficient query performance.

Is it under-provisioned?

An under-provisioned Snowflake warehouse may not have sufficient resources to handle the workload, leading to sluggish query performance and potential bottlenecks. This can negatively impact the user experience and hinder the ability to process large volumes of data in a timely manner. To identify under-provisioning, monitor performance indicators such as query execution time, queue time, and the number of queued queries. If these metrics consistently show poor performance, increasing the warehouse size to allocate more resources may be necessary.

Is it over-provisioned?

An over-provisioned Snowflake warehouse may have more resources than required, resulting in unnecessary costs without providing any significant performance improvements. To identify over-provisioning, analyze how heavily the warehouse is actually used, for example through its load history (the average number of running versus queued queries). If utilization is consistently low, it may be more cost-effective to reduce the warehouse size.
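One way to turn these checks into a first-pass signal is sketched below. It assumes you have exported load samples per time interval, for example the average running and queued query counts that Snowflake's warehouse load history reports. The function name and both thresholds are illustrative assumptions, not recommended values.

```python
from statistics import mean


def classify_provisioning(samples, queued_threshold=0.5, running_threshold=0.25):
    """Give a rough provisioning hint from load samples.

    samples: list of (avg_running, avg_queued) pairs, one per time interval.
    Both thresholds are assumptions to tune for your own workload.
    """
    if not samples:
        raise ValueError("need at least one sample")
    avg_running = mean(running for running, _ in samples)
    avg_queued = mean(queued for _, queued in samples)
    if avg_queued > queued_threshold:
        # Queries regularly wait for capacity.
        return "possibly under-provisioned"
    if avg_running < running_threshold:
        # The warehouse is mostly idle while it is running.
        return "possibly over-provisioned"
    return "no clear signal"


if __name__ == "__main__":
    print(classify_provisioning([(2.0, 1.2), (3.0, 0.9)]))
    print(classify_provisioning([(0.1, 0.0), (0.2, 0.0)]))
```

Treat the output as a prompt for a closer look at query durations and queue times, not as a sizing decision on its own.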

4) Monitor Disk Spillage

It's crucial to monitor both local and remote disk spillage. In Snowflake, when a warehouse cannot fit an operation in memory, it starts spilling data first to the local disk of a warehouse node, and then to remote storage. This process, called disk spilling, leads to decreased performance and can be seen in the query profile as "Bytes spilled to local/remote storage." When the amount of spilled data is significant, it can cause noticeable degradation in warehouse performance.

To decrease the impact of spilling, the following steps can be taken:

- Increase the size of the warehouse, which provides more memory and local disk space.

- Review the query for optimization, especially if it's a new query.

- Reduce the amount of data processed, such as improving partition pruning or projecting only the needed columns.

- Decrease the number of parallel queries running in the warehouse.
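To find the queries worth reviewing, spill figures can be filtered and ranked once they are exported from the query history. The sketch below assumes each row is a dictionary with `query_id`, `bytes_spilled_to_local_storage`, and `bytes_spilled_to_remote_storage` keys, named after the corresponding query history columns. The 1 GiB cutoff and the function name are arbitrary choices for illustration.

```python
def spilling_queries(rows, min_bytes=1024 ** 3):
    """Return IDs of queries that spilled at least min_bytes in total.

    Queries with the most remote spilling come first, since spilling to
    remote storage is the slower of the two.
    """
    flagged = [
        row for row in rows
        if row["bytes_spilled_to_local_storage"]
        + row["bytes_spilled_to_remote_storage"] >= min_bytes
    ]
    flagged.sort(
        key=lambda row: (row["bytes_spilled_to_remote_storage"],
                         row["bytes_spilled_to_local_storage"]),
        reverse=True,
    )
    return [row["query_id"] for row in flagged]


if __name__ == "__main__":
    gib = 1024 ** 3
    # Hypothetical rows exported from the query history.
    history = [
        {"query_id": "a", "bytes_spilled_to_local_storage": 2 * gib,
         "bytes_spilled_to_remote_storage": 0},
        {"query_id": "b", "bytes_spilled_to_local_storage": 0,
         "bytes_spilled_to_remote_storage": 5 * gib},
        {"query_id": "c", "bytes_spilled_to_local_storage": 100,
         "bytes_spilled_to_remote_storage": 0},
    ]
    print(spilling_queries(history))  # ['b', 'a']
```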

5) Determine Optimal Costs and Performance

Optimizing the cost and performance of Snowflake virtual warehouses is critical. The warehouse's size plays a significant role in the speed of CPU-bound queries. Increasing the size of the Snowflake warehouse will boost query speed only up to the point where the query can no longer make use of the additional resources. Beyond this point, larger Snowflake warehouse sizes will not enhance performance, and costs rise without any improvement in query speed.

To achieve the optimal balance between performance and cost, start with an X-SMALL warehouse and gradually scale it up until the query duration stops halving. This indicates that the query can no longer take full advantage of the extra resources, and it helps you identify the sweet spot of maximum performance at the lowest cost. Also, analyze the historical usage of your Snowflake warehouse to identify patterns or spikes in resource utilization that may indicate an incorrectly sized warehouse. By doing so, you can adjust the Snowflake warehouse size to align with your workload demands and ensure optimal performance.

6) Slow or Frequently Timing-Out Queries

If you notice that your queries are consistently slow or frequently timing out, it could indicate that the Snowflake warehouse size is too small for the workload. Sluggish query performance can indicate that the warehouse lacks sufficient resources to handle the workload efficiently. Consider monitoring the query durations and examining whether increasing the warehouse size improves query speed.

7) Reviewing Snowflake Query History for Errors

Examining the Snowflake Query History can provide valuable insights into any errors related to Snowflake warehouse sizes. Look out for error messages such as "Warehouse full" or "Insufficient credit", which can indicate that the warehouse is unable to accommodate the query workload. Identifying and addressing these errors ensures that the Snowflake warehouse size aligns with your workload demands.

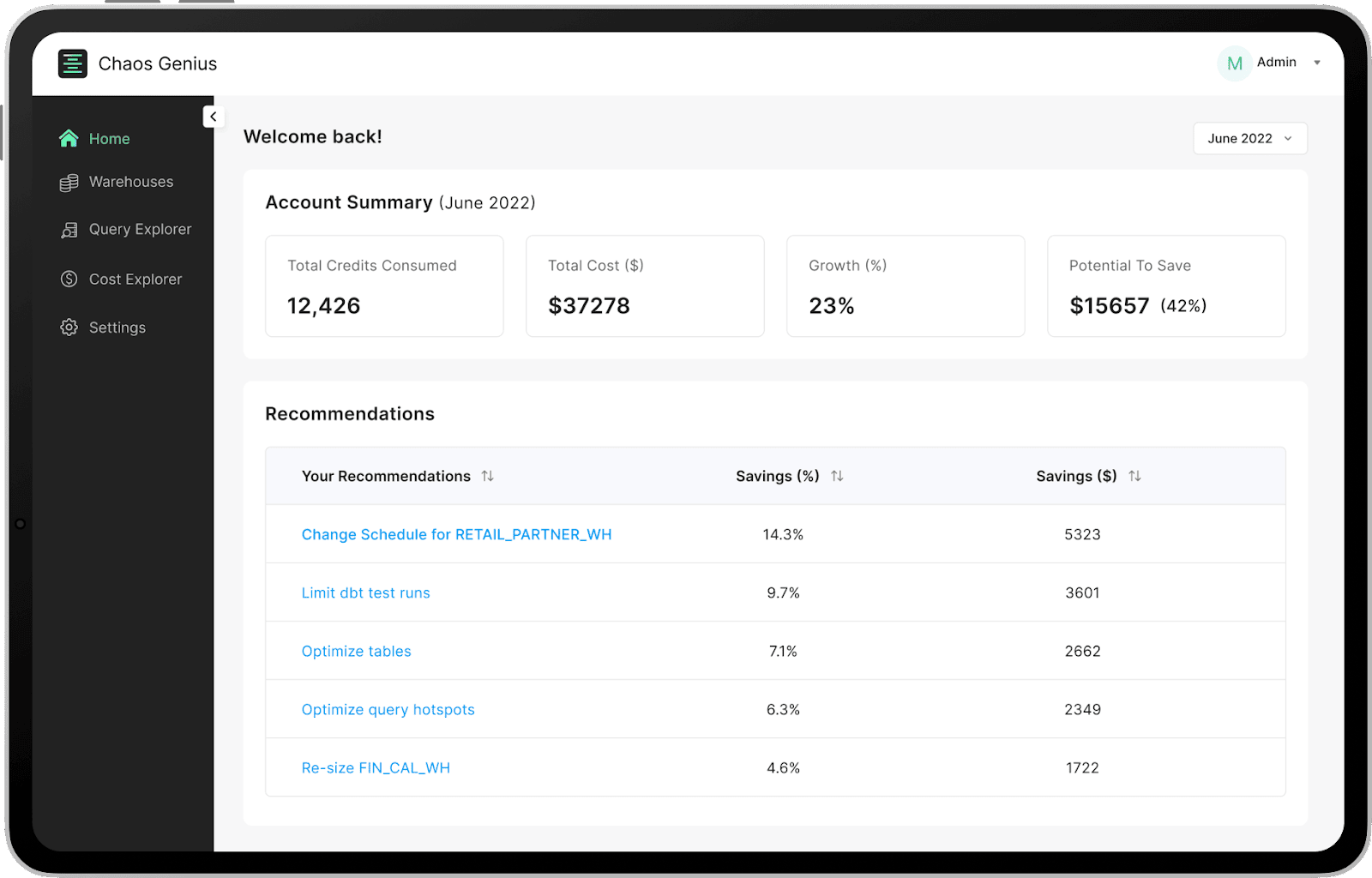
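A quick way to see whether errors cluster on a particular warehouse is to group failed queries by warehouse and message. This is a minimal sketch over rows exported from the query history; the dictionary keys `warehouse_name` and `error_message` follow the query history column names, while the function name and sample messages are placeholders, not actual Snowflake error text.

```python
from collections import Counter


def failures_by_warehouse(rows):
    """Count failed queries per (warehouse, error message) pair.

    A row counts as failed when its error_message is non-empty.
    """
    return Counter(
        (row["warehouse_name"], row["error_message"])
        for row in rows
        if row.get("error_message")
    )


if __name__ == "__main__":
    # Hypothetical rows; the messages are placeholders.
    history = [
        {"warehouse_name": "ETL_WH", "error_message": "statement timed out"},
        {"warehouse_name": "ETL_WH", "error_message": "statement timed out"},
        {"warehouse_name": "BI_WH", "error_message": None},
    ]
    for (warehouse, message), count in failures_by_warehouse(history).most_common():
        print(warehouse, message, count)
```

If one warehouse dominates the counts with timeout-style errors, that is a hint to revisit its size alongside the checks in the earlier steps.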

8) Using Snowflake Observability Tools (Chaos Genius)

Snowflake's resource monitoring is an important step in reducing Snowflake costs, but it may not provide the level of detail needed to make an informed decision. That's exactly where Snowflake observability tools like Chaos Genius come into play.

Chaos Genius uses advanced analytics and machine learning to monitor Snowflake virtual warehouses in real time, providing automated recommendations on how to improve warehouse utilization and right-size warehouses. The tool also offers a dashboard with detailed metrics and insights to quickly identify potential issues, enabling users to optimize Snowflake usage, reduce Snowflake costs, and improve overall Snowflake performance.


Conclusion

Snowflake virtual warehouse is a game-changer for businesses seeking to store, process, and analyze large amounts of data in real-time. Its scalability and affordability make it a popular choice for organizations striving to stay ahead of the data game. Yet, deciding on the right size and structure for a Snowflake Virtual Warehouse can be a daunting task. In this article, we've delved into the nitty-gritty details of Snowflake's virtual warehouse, including how its credits are charged.

Here is a summary of what we covered in the steps needed to right-size the Snowflake warehouse:

- Start with a small Snowflake warehouse size and gradually scale up to find the sweet spot of maximum performance at the lowest cost.

- Automate warehouse suspension and resumption to manage costs and optimize performance.

- Check whether your Snowflake warehouse is under- or over-provisioned.

- Monitor disk spillage.

- Determine the optimal costs and performance based on workload and usage patterns.

- Watch for slow or frequently timing-out queries.

- Review Snowflake Query History for errors related to warehouse size.

- Use Snowflake observability tools like Chaos Genius.

By following the steps outlined in this article and carefully considering Snowflake warehouse sizes, you can optimize your Snowflake virtual warehouse for maximum cost-effectiveness and optimal query performance.

Ultimately, it's all about finding the Goldilocks solution—not too big, not too small, but just right. So, take the time to get to know your data and query usage patterns, and don't be afraid to experiment a little.

FAQs

How many compute nodes are there in each Snowflake warehouse size?

Snowflake warehouses are composed of compute nodes. The X-Small warehouse has 1 node, the Small warehouse has 2 nodes, the Medium warehouse has 4 nodes, and so on, doubling with each size. Each node is commonly reported to have 8 cores/threads, irrespective of the cloud provider.

Can a Snowflake warehouse run multiple queries simultaneously?

Yes, a Snowflake warehouse can handle multiple queries concurrently.

How can I determine the best Snowflake warehouse size for my query?

Start with the X-Small warehouse and increase the size until the query duration no longer halves, which indicates that the query can no longer fully utilize the larger warehouse. To find the best cost-to-performance ratio, choose the warehouse size one step smaller than the size at which the improvement stopped.

What virtual warehouse sizes are available in Snowflake?

Snowflake offers virtual warehouses in various sizes, ranging from X-Small to 6X-Large. Each size represents a doubling of resources and credit consumption compared to the previous size.

How should I approach finding the right Snowflake warehouse size?

Experiment and iterate by starting small and gradually scaling up. Regularly test and monitor performance indicators to identify the warehouse size that delivers the best balance between cost and performance.